Serverless Architectures I/III: Design and technical trade-offs

This is the first part of an article series about serverless architectures, and its focal point is design and technical trade-offs. The second part will cover business benefits and what drives adoption, and the third part will compare major market offerings.

What is serverless?

Serverless means using computing resources as services without having to manage the underlying infrastructure. Notably, serverless does not mean that servers are no longer part of the equation; the term is coined from the developer's point of view.

Key characteristics

Mike Roberts [1] proposed the following acceptance criteria to define serverless:

- Server hosts or processes are not managed by the client/developer (high-level abstractions)

- Elasticity: automatic scaling and provisioning based on request load

- Usage-based pricing, with the details depending on each vendor's offering

- Performance is not defined by the size or number of servers

- High availability and fault tolerance by default

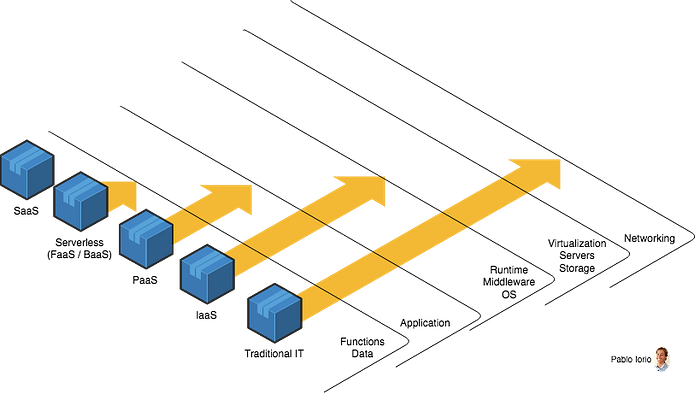

Serverless = FaaS + BaaS

Given these criteria, serverless includes not only functions (FaaS) but also back-end as a service (BaaS), e.g. cloud storage, authentication and database services.

Furthermore, I would like to offer a comparison between the different as-a-service models and traditional IT, where the whole stack is hosted on-premises. The longer the arrow, the more management is required by, or on behalf of, the client.

Advantages of serverless architectures

- Pay for what you use. You are charged for the total number of requests across all your functions (and, with most vendors, for their execution time), and there is usually a free tier. This brings great benefits from a cost analysis point of view. However, IMHO vendors appear to be using this almost-free service as a way to acquire new customers. The portability and migration costs of the functions, of the surrounding vendor services (e.g. API gateway, CDN, load balancers) and of the data need to be considered when performing a cost/benefit analysis.

- Built-in elasticity. The whole architecture scales up and down automatically by provisioning and de-provisioning resources in an autonomic manner, with on-demand execution based on units of consumption.

- Productivity. On one hand, this type of architecture enables high-speed development at the beginning, while the number of functions is limited, because you do not need to care about the initial infrastructure setup. On the other hand, testing and debugging become harder as the number of functions increases. One option to gain more control and efficiency is to use local runtime emulators. Although similar, they are not the same as the Production environment, so you may run into functionality that works in an emulated environment but not in Production.

- Unit testing. Each function is easier to test since it should follow the single responsibility principle, which reduces the testing surface to a minimum. However, integration testing can be more challenging.

- Abstraction. Though high-level abstractions let us focus on business domain complexities, there is an inherent loss of control. This is a classic trade-off in software engineering.

- Event-driven model. Event-driven architectures have been around for a long time, and serverless is a perfect fit for them. Functions can also extend the capabilities of a basic offering through event-driven “hooks”; for instance, a function can be developed to process an OAuth authentication failure. Following the open/closed principle, well-defined interfaces let us extend a solution without increasing the testing surface.

- Microservices. Functions facilitate the microservices architectural pattern, since they make it easier to develop discrete, separately deployable components. On the other hand, increasing the number of building blocks increases maintenance effort, complexity, network latency and monitoring needs. The article “Modules vs microservices” describes the operational complexity of microservices.

- Immutability. Changes are deployed as new functions or new versions. An old version is not meant to be modified and redeployed, since this would cause instability for clients that depend on it. The recommendation is to monitor its usage and discard it once no one is consuming it.

- Isolation and security. Each function can only access its own container space, so if a function runtime gets compromised, it won’t be able to access other functions (containers).

- Easier creation of new environments. Since the creation of all components can be automated, spinning up new environments becomes easier, which helps when multiple streams of work are running in parallel.

- Support for polyglot teams. Depending on the vendor, functions can run JavaScript, Python, Go, any JVM language (Java, Clojure, Scala, etc.) or any .NET language, among others. This allows you to better match the skill set available in your team and the requirements to be implemented.

- Inherently stateless. Statelessness means that every request happens in complete isolation and no state is kept between requests. This is beneficial because it allows elasticity without having to worry about state, and it also reduces complexity. There are also stateful offerings on the market, such as Azure Durable Functions and AWS Step Functions, which cover orchestrator functions and enable the definition of more complex workflows.

- High availability and fault tolerance by default. For instance, the AWS Lambda SLA commits to a Monthly Uptime Percentage of at least 99.95% for each AWS region during any monthly billing cycle.
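To make the unit-testing and statelessness points concrete, here is a minimal sketch in Python. It assumes the AWS Lambda-style `handler(event, context)` entry point and a hypothetical order event shape; both are illustrative assumptions, not something prescribed in this article. Because the handler keeps no state between requests and has a single responsibility, it can be unit tested with a plain dictionary, without deploying anything or running an emulator.

```python
"""A minimal sketch of a stateless, single-responsibility function.

Assumptions (illustrative only): the AWS Lambda-style
`handler(event, context)` signature and a hypothetical event shape
{"order": {"items": [{"price": ..., "quantity": ...}, ...]}}.
"""
import json


def calculate_total(items):
    """Pure business logic: no I/O and no state kept between calls."""
    return round(sum(i["price"] * i["quantity"] for i in items), 2)


def handler(event, context):
    """Entry point: parse the event, delegate, return a response.

    Nothing is stored in module-level variables between requests, so any
    instance can serve any request; this is what lets the platform scale
    instances up and down freely.
    """
    items = event["order"]["items"]
    total = calculate_total(items)
    return {"statusCode": 200, "body": json.dumps({"total": total})}


if __name__ == "__main__":
    # Unit test: the function is exercised with a plain dict and no context.
    event = {"order": {"items": [{"price": 2.5, "quantity": 2},
                                 {"price": 1.0, "quantity": 3}]}}
    response = handler(event, context=None)
    assert response["statusCode"] == 200
    assert json.loads(response["body"]) == {"total": 8.0}
    print(response["body"])  # prints {"total": 8.0}
```

Keeping the business logic in a pure helper (`calculate_total`) and the handler as a thin adapter is one way to keep the unit-testing surface small; integration with the surrounding vendor services still needs separate testing.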

Disadvantages and challenges

- Hybrid approaches. Combining cloud and on-premises components needs careful thought, since all serverless building blocks are elastic by default while on-premises components generally are not. This requires some throttling mechanism between them. This item is sometimes overlooked and can cause downtime.

- Systemic complexity. On one hand, some parts of the system become simpler from the developer's point of view. On the other hand, overall complexity is higher due to more orchestration between functions and services, more message schemas and APIs, network failures and so on. Good monitoring, operational intelligence and deployment automation are required to maintain and debug the whole system.

- Lock-in. Portability is reduced and choices are limited once a vendor is selected. FaaS frameworks that abstract vendor services can increase portability, but migration costs still need to be considered. In most cases, the migration cost does not come from moving the function code to another vendor but from the surrounding services that complement the solution.

- Resource limitations. Functions have limits on memory allocation, timeout, payload size, deployment package size, concurrent executions, etc. These boundaries are consistent with the serverless philosophy: it makes sense that a small function with a single responsibility should run in a short time, with a low memory allocation and a small deployable. As a result, if you need long-running processes with a high memory footprint, you should consider a different architecture.

- Integration testing is hard. Once you reach a certain number of functions and orchestration is required, integration testing becomes challenging. This happens because you are not in control of all the services surrounding the solution: you need to decide how to stub those services, and whether the vendor offers capabilities for this or you need to build them yourself.

- Debugging can be quite challenging. Replicating the cloud environment on your local machine is difficult, and arguably you should not have to attempt it: cloud vendors should provide options to debug within the cloud environment, against Production-like replicas. Let’s see how debugging evolves in the coming years.

- Observability. Observability includes monitoring, alerts, log aggregation and distributed tracing. Monitoring and alerting for each service are included in the vendors' offerings. However, log aggregation and distributed tracing require more effort, especially if you use external services and want or need an aggregated view. This is because some of the usual patterns for achieving them are not available: background processing is not feasible, and neither is running agents or daemons alongside the functions. There is great work being done in this area, so I expect this situation to improve.

- Cold start problem (high latency). When a function that has not been used for some time is triggered by an event, a new container instance must be initialized. This causes a delay between the function being requested and actually executing, known as a “cold start”. Its duration depends on several factors, such as the programming language, the amount of code to be executed, the dependent libraries to be loaded and other related environment setup.

- Overambitious API gateways. This ThoughtWorks Technology Radar platform concern describes the situation in which the gateway layer accumulates too much business logic and becomes aware of the orchestration required between functions/services. As a consequence, the gateway becomes hard to test and portability is reduced.

Conclusion

We are fortunate to live in an era full of different technologies and architectures to choose from, even though the paradox of choice can pose challenges due to the sheer volume of alternatives.

Serverless empowers you to reach perfect resource elasticity under certain conditions; however, it comes with trade-offs, such as gaining abstraction at the cost of losing control. In the next part, I will cover business benefits and the use cases where it makes sense to select serverless technologies.

References

[1] Serverless architectures by Mike Roberts (MartinFowler.com)

[2] Serverless Cloud with Guillermo Rauch (Software Engineering Daily podcast)

[3] Serverless: It’s much much more than FaaS by Paul Johnston

[4] Serverless is cheaper, not simpler by Dmitri Zimine

[5] GOTO 2018 Serverless Beyond the Hype by Alex Ellis

[6] GOTO 2017 Confusion In The Land Of The Serverless by Sam Newman

[7] Elasticity does not equal Scalability by Pablo Iorio

[8] How to Test Serverless Applications by Eslam Hefnawy

[9] The present and future of serverless observability by Yan Cui

